The Category Has Changed

In 2026, Intelligent Video Analytics should mean AI-driven analytics. That distinction matters because the term still carries baggage from an earlier era of video surveillance, when analytics were often associated with motion detection, simple rule-based alerts, and systems that were more useful after an incident than during one. Security teams should expect more now. Flagging movement, sorting recorded clips, and making footage easier to search later are no longer enough. Analytics should identify meaningful activity in context, reduce noise, and support real-time security decisions while an event is still unfolding.

That is the real shift behind modern Intelligent Video Analytics. Its value is no longer limited to post-incident discovery; it increasingly comes from real-time understanding, which creates the conditions for faster deterrence, smarter escalation, and stronger response.

Why Older Analytics Often Fell Short

Many earlier video analytics deployments had some value, but they also created real frustration. Alerts typically fired on simple rules: a line was crossed, motion appeared in a zone, or an object remained in place for too long. In controlled conditions, those triggers could be helpful. In real operating environments, they often produced large volumes of nuisance alerts caused by weather, shadows, headlights, wildlife, or normal site activity that had little to do with actual risk.

That experience shaped how many physical security leaders still think about video analytics software. They remember systems that generated more workload than value, produced events without enough context, and behaved more like rough filters for recorded surveillance than true sources of operational intelligence. In too many cases, the outcome was a better post-incident discovery tool rather than a stronger real-time security capability.

AI Video Analytics Goes Beyond Motion Detection

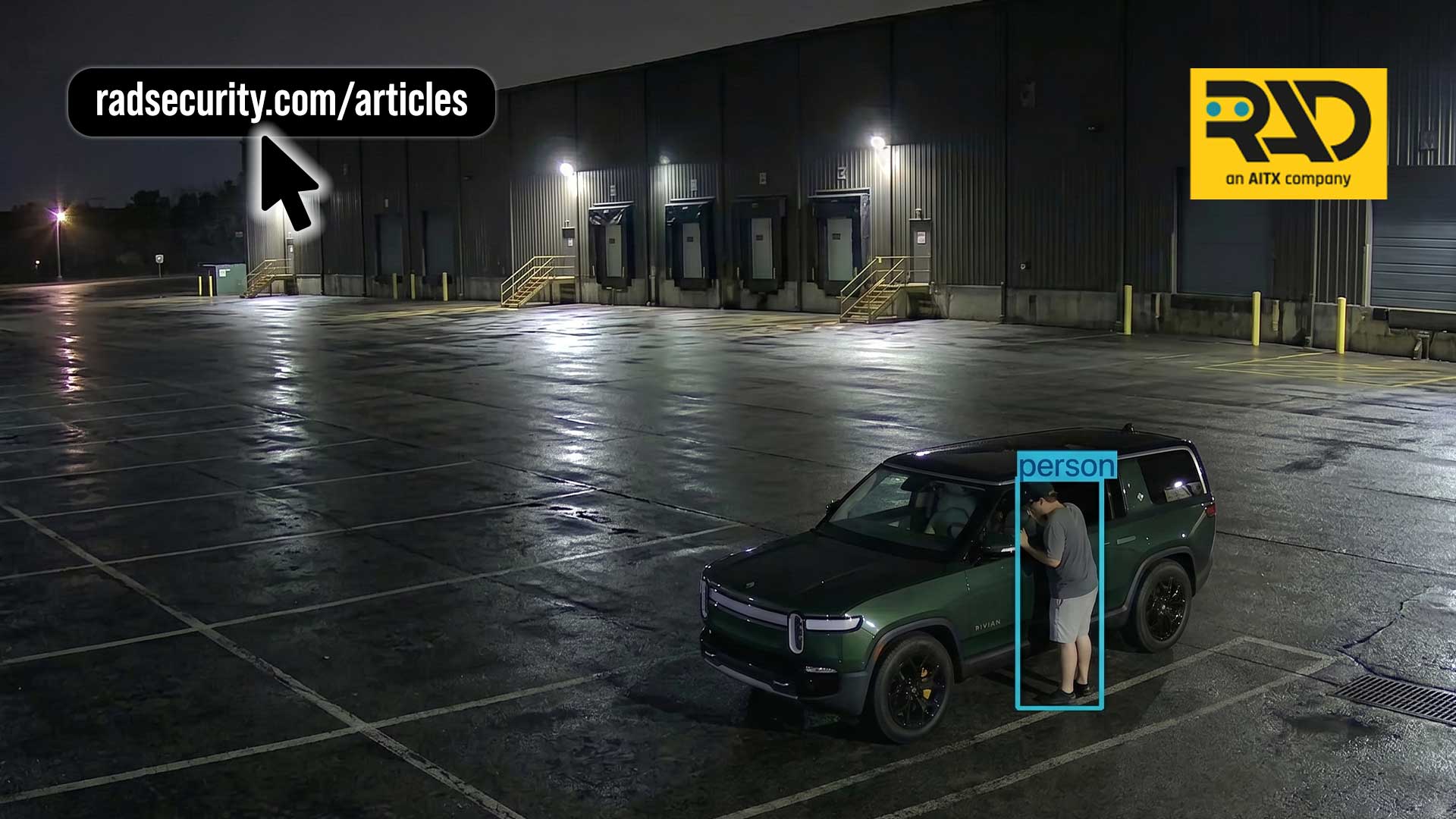

That is exactly where AI Video Analytics changes the conversation. AI-driven analytics is not simply about detecting that something moved in a scene. It is about understanding what moved, how it behaved, where it occurred, and whether the activity deserves attention from a physical security team.

Modern video analytics technology increasingly uses artificial intelligence, machine learning, computer vision, and deep learning to interpret video streams in ways that older surveillance systems simply could not. That means the system can distinguish people from environmental movement, separate vehicles from background activity, and recognize patterns of behavior that matter operationally. Instead of turning every change in a scene into an alert, it can focus on events that align more closely with actual security risk.

The Core Expectation in 2026

The baseline expectation for Intelligent Video Analytics in 2026 should be simple. It should improve the quality of real-time situational awareness.

That means security teams should expect analytics to identify meaningful activity more accurately than legacy systems, generate usable metadata, reduce false alarms, and support a clearer path from detection to action. It also means that Intelligent Video Analytics should no longer be treated as a narrow feature within a camera. It should be understood as part of a broader real-time security architecture that includes AI analytics, edge processing, cloud integration, and response workflows.

Why This Matters to Physical Security

For physical security teams, the difference is operational, not theoretical. A system that helps find footage faster after a perimeter intrusion still has some value, but it remains reactive. A system that identifies a person approaching a restricted area, recognizes the behavior as suspicious, and supports action while the event is still in progress changes the security outcome.

This is why the category matters more now. Better analytics does not simply make the archive smarter; it makes the live environment more understandable, which in turn improves the speed, consistency, and relevance of security response. In the right deployments, the same AI-driven analytics that improves enterprise physical security can also support broader public safety objectives, but its most immediate value is often found in day-to-day site protection and faster operational response.

Human Detection Matters, but Context Matters More

Human detection remains one of the most important functions in AI-driven analytics, but it is no longer enough on its own. Most organizations no longer need to be convinced that detecting a person in a scene can be useful. The real question is whether the analytics can identify that presence in context.

A person walking through an active parking lot during the day may be completely ordinary. A person moving along the perimeter of the same site after hours is different. A person standing briefly near a gate may not matter. A person lingering, pacing, or returning repeatedly may signal something else entirely. This is why context matters far more than raw detection alone.
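To make the difference between raw detection and context concrete, here is a minimal Python sketch of zone- and time-aware handling of a person detection. Everything in it is hypothetical: the `ZoneRule` and `PersonDetection` structures, the zone names, the hours, and the dwell limits are illustrative assumptions, not any product's configuration. It assumes an upstream model has already detected and tracked the person.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class ZoneRule:
    """Hypothetical per-zone policy; thresholds are illustrative, not product defaults."""
    open_from: time        # start of normal activity hours
    open_until: time       # end of normal activity hours
    dwell_limit_s: float   # seconds a person may stay before it deserves review


@dataclass(frozen=True)
class PersonDetection:
    zone: str
    seen_at: time          # local time of the detection
    dwell_s: float         # seconds this tracked person has been in the zone


def within_hours(t: time, start: time, end: time) -> bool:
    """True if t is in [start, end); also handles windows that cross midnight."""
    if start <= end:
        return start <= t < end
    return t >= start or t < end


def classify(det: PersonDetection, rules: dict) -> str:
    """Map one person detection to 'ignore', 'review', or 'alert' using zone context."""
    rule = rules.get(det.zone)
    if rule is None:
        return "ignore"                  # zone is not covered by any policy
    if not within_hours(det.seen_at, rule.open_from, rule.open_until):
        return "alert"                   # presence outside normal hours
    if det.dwell_s > rule.dwell_limit_s:
        return "review"                  # lingering during normal hours
    return "ignore"                      # ordinary activity


rules = {
    "parking_lot": ZoneRule(time(6), time(22), dwell_limit_s=600),
    "perimeter": ZoneRule(time(7), time(19), dwell_limit_s=60),
}
print(classify(PersonDetection("parking_lot", time(14, 0), 30), rules))   # ignore
print(classify(PersonDetection("perimeter", time(23, 30), 5), rules))     # alert
print(classify(PersonDetection("perimeter", time(12, 0), 120), rules))    # review
```

The detector output is the same kind of event in all three calls; only the zone policy changes the outcome.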

Behavior Detection Is Becoming a Core Requirement

Behavior detection is one of the clearest signs that Intelligent Video Analytics has matured. Security teams are no longer looking only for systems that can count, detect, or tag. They are looking for systems that can support behavior analysis and behavioral analytics in ways that help identify early signals of risk.

Loitering near a loading dock, repeated approach behavior near a fence line, after-hours presence in a normally inactive zone, or unusual stopping patterns near an entry point are all examples. These conditions may not always be incidents yet, but they often represent pre-incident behavior that deserves earlier attention. That is why behavior detection and behavior analysis have become far more relevant to physical security programs than simple motion alerts ever were.
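Loitering and repeated approach can both be derived from the same track data. The sketch below is an illustrative assumption, not a vendor algorithm: it takes time-ordered (timestamp, inside-zone) samples for one tracked person, accumulates dwell time, counts separate entries, and flags the track when either crosses a hypothetical threshold.

```python
from dataclasses import dataclass


@dataclass
class ZoneActivity:
    dwell_s: float = 0.0   # cumulative seconds spent inside the zone
    entries: int = 0       # number of separate times the track entered the zone


def summarize(samples):
    """samples: time-ordered (timestamp_s, inside_zone) pairs for ONE tracked person.
    An interval counts as dwell time if the person was inside at its start."""
    act = ZoneActivity()
    prev_t, prev_inside = None, False
    for t, inside in samples:
        if prev_t is not None and prev_inside:
            act.dwell_s += t - prev_t
        if inside and not prev_inside:
            act.entries += 1
        prev_t, prev_inside = t, inside
    return act


def is_pre_incident(act, max_dwell_s=120.0, max_entries=3):
    """Hypothetical thresholds: long cumulative dwell, or repeated returns to the zone."""
    return act.dwell_s > max_dwell_s or act.entries >= max_entries


# A person who keeps returning to a fence line, never staying long.
pacing = [(0, True), (10, True), (20, False), (30, True), (40, False), (50, True), (55, False)]
act = summarize(pacing)
print(act, is_pre_incident(act))   # ZoneActivity(dwell_s=35.0, entries=3) True
```

Counting entries matters because a person who paces in and out of a zone may never trip a simple dwell timer.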

Vehicle Analytics Has Become More Operationally Important

Vehicle-related events are another area where AI Video Analytics has become much more useful. In older deployments, vehicle-related analytics often focused on broad traffic awareness or post-event review. In modern physical security, vehicle analytics is increasingly tied to site control, perimeter protection, and risk identification.

That includes unauthorized vehicle presence, unusual idling, tailgating through controlled points of entry, parking violations in restricted zones, and suspicious activity near sensitive infrastructure. Vehicle tailgating and vehicle monitoring are strong examples of where analytics creates practical value, especially when combined with systems like AVA, which brings AI-driven awareness to access control and entry management.
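Vehicle tailgating at a gate can be framed as a matching problem between access grants and vehicle crossings. This minimal sketch assumes a hypothetical setup in which each grant authorizes exactly one vehicle that must cross within a short window; any crossing left unmatched is flagged. The function name, timestamps, and 10-second window are illustrative.

```python
def find_tailgates(grants, crossings, window_s=10.0):
    """Return vehicle-crossing timestamps that no access grant accounts for.

    Assumption: each grant (badge read, plate match, or remote open) authorizes exactly
    one vehicle, which must cross the gate line within window_s seconds after the grant.
    """
    unused = sorted(grants)
    flagged = []
    for c in sorted(crossings):
        # Earliest still-unused grant that is recent enough to cover this crossing.
        match = next((g for g in unused if c - window_s <= g <= c), None)
        if match is None:
            flagged.append(c)          # second vehicle through on one grant, or no grant at all
        else:
            unused.remove(match)       # a grant is consumed by the first vehicle it covers
    return flagged


print(find_tailgates(grants=[100.0], crossings=[103.0, 105.0]))         # [105.0]
print(find_tailgates(grants=[100.0, 200.0], crossings=[101.0, 202.0]))  # []
```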

License Plate Recognition Adds Valuable Context

License Plate Recognition remains one of the most useful analytics when it is tied to a real operational need. It can support access validation, watchlists, investigative review, and exception handling. It can also strengthen response when an alert is connected to a known or flagged vehicle. In these workflows, the ability to associate events with vehicles, license plates, and entry behavior adds a practical layer of context that many legacy surveillance systems never had.

Like any analytic, though, its value depends on how it is used. On its own, License Plate Recognition is just another data point. Used correctly, it becomes part of a more complete event picture that helps security teams understand which vehicle entered, when it entered, and whether the activity aligns with site policy.
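The idea that a plate read becomes useful only alongside site policy can be sketched as follows. The `PlateRead` structure, the allowlist and watchlist sets, the outcome names, and the overnight window are hypothetical; a real deployment would source these from its own access control system and policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlateRead:
    plate: str   # raw text from the LPR engine
    gate: str
    hour: int    # local hour of the read, 0-23


def normalize(plate):
    """Uppercase and drop spaces/dashes so 'ab-123 c' and 'AB123C' compare equal."""
    return "".join(ch for ch in plate.upper() if ch.isalnum())


def evaluate(read, allowlist, watchlist, night=(22, 6)):
    """Return 'escalate', 'verify', 'review', or 'allow' for one plate read.

    allowlist / watchlist: sets of already-normalized plates (hypothetical site data).
    night: (start_hour, end_hour) window assumed to wrap past midnight.
    """
    plate = normalize(read.plate)
    if plate in watchlist:
        return "escalate"                 # known or flagged vehicle
    if plate not in allowlist:
        return "verify"                   # unknown vehicle at a controlled entry
    start, end = night
    if read.hour >= start or read.hour < end:
        return "review"                   # permitted vehicle, unusual time
    return "allow"


allow, watch = {"AB123C"}, {"ZZ999"}
print(evaluate(PlateRead("ab-123 c", "north", 14), allow, watch))  # allow
print(evaluate(PlateRead("AB123C", "north", 23), allow, watch))    # review
print(evaluate(PlateRead("ZZ 999", "south", 10), allow, watch))    # escalate
```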

Better Metadata Quietly Changes Everything

One of the biggest improvements in modern video analytics software is the quality of metadata it can generate. Video surveillance has always produced large volumes of footage. The challenge has never been whether the cameras could record enough. The challenge has been whether security teams could extract insight from all that video data fast enough to make it useful.

AI-driven analytics converts raw video streams into structured event data. That makes video footage easier to search, filter, compare, and investigate. It also makes real-time monitoring more effective because alerts arrive with context rather than as raw clips that still require interpretation from scratch.
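What structured event data means in practice can be shown with a small event schema and a filter over it. The `Event` fields and the `search` helper below are hypothetical, chosen for illustration; they are not the metadata format of any particular VMS or analytics product.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class Event:
    camera_id: str
    start: datetime
    label: str                          # object class, e.g. "person" or "vehicle"
    confidence: float                   # model score in [0, 1]
    behavior: Optional[str] = None      # e.g. "loitering"; None if nothing flagged
    attributes: dict = field(default_factory=dict)  # e.g. {"color": "red"}


def search(events, label=None, behavior=None, since=None, min_conf=0.0, **attrs):
    """Filter structured events instead of scrubbing footage; newest first."""
    hits = [
        e for e in events
        if (label is None or e.label == label)
        and (behavior is None or e.behavior == behavior)
        and (since is None or e.start >= since)
        and e.confidence >= min_conf
        and all(e.attributes.get(k) == v for k, v in attrs.items())
    ]
    return sorted(hits, key=lambda e: e.start, reverse=True)


log = [
    Event("gate-1", datetime(2026, 1, 5, 2, 14), "vehicle", 0.91, attributes={"color": "red"}),
    Event("dock-3", datetime(2026, 1, 5, 2, 20), "person", 0.88, behavior="loitering"),
    Event("gate-1", datetime(2026, 1, 5, 9, 2), "vehicle", 0.95, attributes={"color": "white"}),
]
for e in search(log, label="vehicle", color="red"):
    print(e.camera_id, e.start)   # gate-1 2026-01-05 02:14:00
```

The same records can feed both a live alert queue and a later investigation, which is the practical payoff of generating metadata at detection time.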

Forensic Search Still Matters

None of this means post-incident discovery has become irrelevant. Better forensic search remains valuable. Faster access to relevant footage can reduce investigation time, improve reporting, support evidence management, and make security surveillance more useful overall.

However, in 2026 that should not be the main story. If Intelligent Video Analytics is framed only as a better forensic search tool, it undersells what AI-driven analytics can actually do. The more important story is that it can identify meaningful events in real time, which makes faster intervention possible.

Edge AI Is One of the Defining Changes

One of the biggest reasons Intelligent Video Analytics has become more relevant to physical security is the rise of Edge AI and edge computing. In traditional architectures, much of the analysis took place downstream. Video feeds were transmitted to centralized or cloud-based analytics platforms for review and processing. That model still has value, especially for enterprise reporting and retention, but it also introduces delay.

Edge-based analytics changes that model by moving processing closer to the source. When analytics run on the camera or an on-site device rather than on a remote server, that device can interpret activity without waiting on a network round trip, reduce bandwidth dependence, and support quicker action. That is a major shift in how video analytics technology is being deployed.

Why Processing at the Edge Matters

The benefits of processing at the edge are practical. Lower latency improves responsiveness. Less reliance on constant upstream transmission helps in environments where connectivity is limited. Faster local analysis means the system can detect objects, suspicious behaviors, and threats while the activity is still happening, not just after the video data has been archived.

That matters because security incidents unfold in real time. A person breaching a perimeter, loitering after hours, or moving through a restricted zone does not wait for a perfect workflow. The earlier the system can interpret the activity, the more options the organization has. Modern security cameras, especially AI security cameras with analytics at the edge, are becoming more than passive recording devices. They are increasingly part of the active security layer.

Deep Learning and NVIDIA Jetson Help Make This Practical

The rise of Edge AI in physical security has been enabled in part by major advances in embedded processing hardware. Systems built around accelerated edge compute, including platforms that use NVIDIA Jetson, make it possible to run deep learning models locally rather than pushing every event to the cloud first.

That matters because AI-driven analytics requires real compute. Running object detection, classification, and recognition alongside behavior analysis and threat detection in real time is very different from basic motion detection. It requires both capable models and enough local processing power to run them continuously.

This is one reason edge AI devices such as ROSA have become more relevant in modern security surveillance. They combine real-time AI analytics with on-device audio and visual response, which gives the site an active security layer rather than just another passive camera feed.

Cloud-Based Analytics Still Has an Important Role

None of this means cloud-based analytics is obsolete. Cloud integration remains important for centralized visibility, reporting, multi-site oversight, forensic search, and long-term retention. In many environments, the right architecture is not edge or cloud. It is edge where speed and local responsiveness matter most, combined with cloud-based analytics where scale, management, and broader coordination matter most.
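The edge-plus-cloud split described above can be sketched in a few lines. This is a hypothetical model, not a product architecture: an `EdgeNode` acts immediately on events above a priority threshold, buffers every event locally, and forwards the buffer to a cloud `upload` callback whenever connectivity is available. The priority scale, threshold, and buffer size are assumptions.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class EdgeEvent:
    kind: str       # e.g. "perimeter_breach"
    priority: int   # 0-10, higher is more urgent (hypothetical scale)


class EdgeNode:
    """Hypothetical edge device: acts locally on urgent events, buffers everything for the cloud."""

    def __init__(self, act_threshold=8, buffer_size=1000):
        self.act_threshold = act_threshold
        self.outbox = deque(maxlen=buffer_size)  # oldest events drop if offline too long
        self.local_actions = []

    def handle(self, ev):
        # Local decision: no network round trip before the on-site response.
        if ev.priority >= self.act_threshold:
            self.local_actions.append("deter:" + ev.kind)  # stand-in for audio/light deterrence
        self.outbox.append(ev)  # every event is kept for central review and reporting

    def sync(self, upload, online):
        """Send buffered events through `upload` when the link is up; return how many were sent."""
        if not online:
            return 0
        sent = 0
        while self.outbox:
            upload(self.outbox[0])   # only remove an event after upload returns
            self.outbox.popleft()
            sent += 1
        return sent


node = EdgeNode()
node.handle(EdgeEvent("perimeter_breach", 9))
node.handle(EdgeEvent("vehicle_pass", 2))
cloud = []
print(node.local_actions)                     # ['deter:perimeter_breach']
print(node.sync(cloud.append, online=False))  # 0
print(node.sync(cloud.append, online=True))   # 2
```

Note the bounded buffer: if a site stays offline long enough, the oldest events are dropped first, a trade-off a real deployment would need to decide deliberately.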

This is increasingly how security operations centers think about the category. Edge-based analytics helps improve real-time awareness and local action. Cloud-based analytics helps unify reporting, search, event review, and operational consistency across multiple locations.

Video Management Systems Are Still Part of the Picture

Video Management Systems remain central to how many organizations run their surveillance environments, which means analytics cannot be treated as a silo. Intelligent Video Analytics has to work with the systems security teams already use to review events, investigate incidents, and manage video feeds across sites.

That is why integrations matter. Analytics should not create friction with the broader security workflow. It should improve it by making video data more usable, alerts more relevant, and investigations more efficient. Buyers should expect modern video analytics software to do more than generate alerts. It should fit into real operational environments built around security cameras, video management systems, and response procedures.

How Security Teams Are Using AI Analytics Today

One of the strongest ways to understand the category now is to look at use cases. Security teams are not adopting AI analytics because the phrase sounds modern. They are adopting it to solve specific operational problems.

Loitering Deterrence

Loitering is one of the clearest examples of where AI-driven analytics creates value. A person lingering near an entrance, loading zone, or parking area may not trigger a strong response in a legacy system. With modern behavior detection and behavioral analytics, that activity can be identified earlier and treated as a relevant condition instead of background noise. Loitering deterrence is a strong example of this shift, particularly when paired with ROSA or other edge AI devices that can respond at the scene.

Perimeter Protection and Perimeter Breaches

Perimeter protection is another major use case. AI-driven analytics can identify perimeter breaches, detect suspicious approach behavior, and improve how security teams monitor large outdoor environments. Perimeter intrusion is especially relevant in logistics, where yards, trailer lots, and facility boundaries can be difficult to cover consistently. RIO and ROAMEO both fit naturally into this conversation because they extend analytics and visible presence into wide-area or mobile deployments.

Vehicle Tailgating and Vehicle Monitoring

Vehicle movement remains a major operational concern at many sites. AI-driven analytics helps identify tailgating, unauthorized entry, and unusual vehicle behavior around gates, lanes, and restricted areas. Vehicle tailgating and vehicle monitoring show how analytics supports both site awareness and control. In logistics environments, this can be particularly important for BOLO-style vehicle monitoring and access review using analytics tied to vehicles and license plates.

Firearm Detection

Firearm detection is another example where AI-driven analytics helps security teams focus on events that are operationally urgent. In environments such as healthcare, where the speed of recognition matters greatly, analytics has to do more than record the event. It has to help surface the threat quickly enough to support deterrence, escalation, and protective action. ROSA is a natural fit here because it combines AI detection with edge deterrence at the scene.

PPE Compliance and Construction Security

AI analytics is also being used for PPE compliance, particularly in environments such as construction, where safety and security often overlap. PPE compliance is a good example of how analytics can support both operational oversight and evidence management. RIO is especially relevant in these environments because it brings mobile analytics and visible security coverage to infrastructure-limited sites.

Real-Time Data Should Lead to Real-Time Action

This is the central distinction that matters most. Traditional analytics often improved post-incident discovery. AI-driven analytics should improve real-time understanding. Once the system can identify meaningful activity in context and surface real-time data with enough accuracy, the security operation is no longer limited to a reactive model built around archived video footage.

At that point, the organization can do more than investigate what already happened. It can begin verifying events, issuing automated alerts, escalating incidents, and taking steps to influence the outcome while the incident window is still open. That is where the difference between passive surveillance systems and modern AI Video Analytics becomes most visible.

This Is Where Agentic AI Becomes More Valuable

That distinction also changes how security teams should think about agentic AI. If the analytics layer is weak, noisy, or low on context, then higher-level automation ends up functioning like a better triage or discovery tool. It may improve workflow, but it still spends too much effort sifting through noise.

When AI-driven analytics reliably identifies meaningful activity in real time, agentic AI can be focused where it creates the most value. It can verify events, support automated alerts, initiate deterrence, escalate according to policy, notify stakeholders, and coordinate real-time security response rather than acting primarily as a better post-incident discovery tool. That is also where organizations can reduce the risk of escalation into broader security breaches by acting sooner and with better context.

What Security Teams Should Expect Now

Security teams evaluating Intelligent Video Analytics should hold the category to a higher standard than they did in the past. They should expect AI-driven analytics rather than simple motion detection. They should expect stronger behavior detection, behavioral analytics, object detection, threat detection, and better metadata. They should expect edge-based analytics where speed matters, cloud-based analytics where scale matters, and real use-case relevance across physical security environments.

Most importantly, they should expect the system to improve more than search. They should expect it to improve how the organization sees, understands, and responds to meaningful events in real time.

Conclusion

In 2026, Intelligent Video Analytics should not be described as a slightly better version of traditional video surveillance. It should be understood as AI-driven analytics that brings together artificial intelligence, machine learning, computer vision, deep learning, edge computing, cloud integration, and stronger video analytics software to improve how physical security teams interpret and respond to events.

The category has moved beyond motion detection and many of the frustrations associated with earlier analytics. It still improves forensic search, evidence management, and post-incident discovery, but that is no longer the main story. The main story is that AI-driven analytics can identify meaningful activity in context and make real-time security response more practical, more consistent, and more effective.

That is the standard security teams should expect now. And that is where Intelligent Video Analytics becomes more than a video tool. It becomes part of a modern security operation.